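Performance Test by RAID Level

A colleague at work claimed, "With RAID 6, every write is written three times, so I/O drops to 1/3!", and that is what started this test.

Fortunately, the company had a 12-bay disk array and an idle server.

I also took two days of leave right before the weekend, so I had plenty of time.

The test cases were: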

Disk : 2 ~ 12

Raid : 0, 10, 5, 6

R/W : read, write, R70:W30

This came to 312 test cases in total.

First, the specs of the server, the HBA (disk controller), and the disk array…

1. server

PowerEdge R620

Architecture: x86_64

CPU op-mode(s): 32-bit, 64-bit

Byte Order: Little Endian

CPU(s): 24

On-line CPU(s) list: 0-23

Thread(s) per core: 2

Core(s) per socket: 6

Socket(s): 2

NUMA node(s): 2

Vendor ID: GenuineIntel

CPU family: 6

Model: 45

Model name: Intel(R) Xeon(R) CPU E5-2620 0 @ 2.00GHz

Stepping: 7

CPU MHz: 1200.000

BogoMIPS: 4004.06

Virtualization: VT-x

L1d cache: 32K

L1i cache: 32K

L2 cache: 256K

L3 cache: 15360K

NUMA node0 CPU(s): 0,2,4,6,8,10,12,14,16,18,20,22

NUMA node1 CPU(s): 1,3,5,7,9,11,13,15,17,19,21,23

memory: 8 GB x 22 DIMMs = 176 GB

Memory Device

Array Handle: 0x1000

Error Information Handle: Not Provided

Total Width: 72 bits

Data Width: 64 bits

Size: 8192 MB

Form Factor: DIMM

Set: 1

Locator: DIMM_A4

Bank Locator: Not Specified

Type: DDR3

Type Detail: Synchronous Registered (Buffered)

Speed: 1600 MHz

Manufacturer: 00CE00B300CE

Serial Number:

Asset Tag: 03132463

Part Number: M393B1G70BH0-YK0

Rank: 1

Configured Clock Speed: 1333 MHz

2. HBA

Name : PERC H810 Adapter

Slot ID : PCI Slot 1

State : Ready

Firmware Version : 21.3.2-0005

Minimum Required Firmware Version : Not Applicable

Driver Version : 06.811.02.00-rh1

Minimum Required Driver Version : Not Applicable

Storport Driver Version : Not Applicable

Minimum Required Storport Driver Version : Not Applicable

Number of Connectors : 2

Rebuild Rate : 30%

BGI Rate : 30%

Check Consistency Rate : 30%

Reconstruct Rate : 30%

Alarm State : Not Applicable

Cluster Mode : Not Applicable

SCSI Initiator ID : Not Applicable

Cache Memory Size : 1024 MB

Patrol Read Mode : Auto

Patrol Read State : Stopped

Patrol Read Rate : 30%

Patrol Read Iterations : 0

Abort Check Consistency on Error : Disabled

Allow Revertible Hot Spare and Replace Member : Enabled

Load Balance : Auto

Auto Replace Member on Predictive Failure : Disabled

Redundant Path view : Not Applicable

CacheCade Capable : Yes

Persistent Hot Spare : Disabled

Encryption Capable : Yes

Encryption Key Present : No

Encryption Mode : None

Preserved Cache : Not Applicable

Spin Down Unconfigured Drives : Disabled

Spin Down Hot Spares : Disabled

Spin Down Configured Drives : Disabled

Automatic Disk Power Saving (Idle C) : Disabled

Start Time (HH:MM) : Not Applicable

Time Interval for Spin Up (in Hours) : Not Applicable

T10 Protection Information Capable : No

Non-RAID HDD Disk Cache Policy : Not Applicable

3. OS

[root@testlab1 youngju]# lsb_release -a

LSB Version: :core-4.1-amd64:core-4.1-noarch

Distributor ID: RedHatEnterpriseServer

Description: Red Hat Enterprise Linux Server release 7.3 (Maipo)

Release: 7.3

Codename: Maipo

[root@testlab1 youngju]# uname -a

Linux testlab1 3.10.0-514.10.2.el7.x86_64 #1 SMP Mon Feb 20 02:37:52 EST 2017 x86_64 x86_64 x86_64 GNU/Linux

[root@testlab1 youngju]#

The performance test was run with vdbench.

vdbench script

sd=sd1,lun=/dev/sdc

wd=wd1,sd=sd1,rdpct=100,xfersize=512

rd=run1,wd=wd1,iorate=max,elapsed=120,interval=5,openflags=o_direct,forrdpct=(100,0,70),forxfersize=(512,4096)
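To make the sweep in that parameter file easier to see, here is a rough sketch that just enumerates the combinations implied by forrdpct=(100,0,70) and forxfersize=(512,4096). The function name list_runs is made up for illustration, and the order in which vdbench actually executes the combinations may differ from this loop order.

```shell
# Enumerate the workload combinations implied by
# forrdpct=(100,0,70) and forxfersize=(512,4096) in the run definition.
# rdpct=100 is the read case, rdpct=0 the write case, rdpct=70 is R70:W30.
# list_runs is a hypothetical helper, not part of vdbench.
list_runs() {
    for rdpct in 100 0 70; do
        for xfersize in 512 4096; do
            echo "rdpct=$rdpct xfersize=$xfersize"
        done
    done
}

list_runs
echo "runs per virtual disk: $(list_runs | wc -l | tr -d ' ')"
```

With elapsed=120, each of those runs measures for 120 seconds, so the count matters for how long one RAID/disk combination takes.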

4. disk 1TB, 7200rpm sas

ID : 1:0:11

Status : Ok

Name : Physical Disk 1:0:11

State : Online

Power Status : Spun Up

Bus Protocol : SAS

Media : HDD

Part of Cache Pool : Not Applicable

Remaining Rated Write Endurance : Not Applicable

Failure Predicted : No

Revision : GS0A

Driver Version : Not Applicable

Model Number : Not Applicable

T10 PI Capable : No

Certified : Yes

Encryption Capable : No

Encrypted : Not Applicable

Progress : Not Applicable

Mirror Set ID : Not Applicable

Capacity : 931.00 GB (999653638144 bytes)

Used RAID Disk Space : 1.20 GB (1288437760 bytes)

Available RAID Disk Space : 929.80 GB (998365200384 bytes)

Hot Spare : No

Vendor ID : DELL(tm)

Product ID : ST1000NM0023

Serial No. :

Part Number :

Negotiated Speed : 6.00 Gbps

Capable Speed : 6.00 Gbps

PCIe Negotiated Link Width : Not Applicable

PCIe Maximum Link Width : Not Applicable

Sector Size : 512B

Device Write Cache : Not Applicable

Manufacture Day : 07

Manufacture Week : 29

Manufacture Year : 2013

SAS Address : 5000C50056FBA12D

Non-RAID HDD Disk Cache Policy : Not Applicable

Disk Cache Policy : Not Applicable

Form Factor : Not Available

Sub Vendor : Not Available

ISE Capable : No

[root@testlab1 youngju]# omreport storage pdisk controller=1

Shell scripts used for the test

[root@testlab1 youngju]# grep -iv '^#[a-zA-Z]' create-vdisk.sh

#!/bin/bash

RAID=${1}

DISKN=`echo $(($2-1)) `

PDISK=`seq -s "," -f 1:0:%g 0 $DISKN`

SIZE=$3

echo -e "\e[93m----------- dell omsa RAID=$RAID PDISK=$2 vdisk create -----------\e[0m"

omconfig storage controller action=createvdisk controller=1 raid=r${RAID:=0} size=${SIZE:=5g} pdisk=${PDISK:=1:0:0,1:0:1} stripesize=64kb readpolicy=nra writepolicy=wt name=yj-r${RAID}-${2}disk

if [ $? = 0 ]; then

echo -e "\e[93m----------- dell omsa RAID=$RAID PDISK=$2 vdisk create done -----------\e[0m"

else

echo -e "\e[91m----------- dell omsa RAID=$RAID PDISK=$2 vdisk create fail -----------\e[0m"

exit 1

fi
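The PDISK line in create-vdisk.sh turns a disk count into a comma-separated list of physical disk IDs of the form 1:0:n. As a rough sketch of the same idea without depending on seq, here is a hypothetical helper, build_pdisk_list, which is not part of the original scripts. The 1:0: prefix is simply copied from the script above.

```shell
# build_pdisk_list COUNT: print "1:0:0,1:0:1,..." for COUNT disks.
# Hypothetical helper; the "1:0:" prefix is copied from create-vdisk.sh.
# The body runs in a subshell, so its variables do not leak to the caller.
build_pdisk_list() (
    count=$1
    list=""
    i=0
    while [ "$i" -lt "$count" ]; do
        # Prepend a comma only when the list already has an entry
        list="${list:+$list,}1:0:$i"
        i=$((i + 1))
    done
    echo "$list"
)

build_pdisk_list 4   # prints 1:0:0,1:0:1,1:0:2,1:0:3
```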

[root@testlab1 youngju]# grep -iv '^#[a-zA-Z]' delete-vdisk.sh

#!/bin/bash

VDISK=$1

VNAME=`bash status-vdisk.sh |grep -i name |head -n1|awk '{print $3}'`

echo -e "\e[93m----------- dell omsa vdisk ${VNAME:=no vdisk} delete -----------\e[0m"

omconfig storage vdisk action=deletevdisk controller=1 vdisk=${VDISK:=1}

echo -e "\e[93m----------- dell omsa vdisk ${VNAME:=no vdisk} delete done -----------\e[0m"

[root@testlab1 youngju]# grep -iv '^#[a-zA-Z]' status-vdisk.sh

#!/bin/bash

omreport storage vdisk controller=1 vdisk=1

[root@testlab1 youngju]# grep -iv '^[[:space:]]*#\|^$' raid-test.sh

#!/bin/bash

RSTD=test-result-`date +%Y%m%d-%H%M`

RSTD512=test-512-result-`date +%Y%m%d-%H%M`

RSTDCACHE=test-cache-result-`date +%Y%m%d-%H%M`

mkdir $RSTD512

for R in 0 10 5 6

do

for D in `seq 2 12`

do

sleep 2

bash delete-vdisk.sh

sleep 2

bash create-vdisk.sh $R $D 12g

if [ $? = 0 ] ; then

sleep 3

omconfig storage vdisk action=slowinit controller=1 vdisk=1

sleep 2

while [ "`bash status-vdisk.sh |grep -i state|head -n1 |awk '{print $3}'`" != Ready ]

do

echo -e "\e[91m ----- vdisk is initializing --------\e[0m"

bash status-vdisk.sh |grep -i progress

sleep 5

done

echo

echo -e "\e[93m ----- vdisk is initialized --------\e[0m"

sleep 1

vdbench/vdbench -f youngju-test.param-512 -o ${RSTD512}/raid${R}-disk${D} -w 10

sleep 1

else

echo vdisk create fail raid $R disk $D

echo

fi

echo -e "\e[96m----------- test raid $R disk $D done ------------\e[0m"

echo

echo

done

done

[root@testlab1 youngju]#
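The while loop in raid-test.sh keeps polling until the vdisk state is Ready, so it would spin forever if the initialization never finished. A minimal sketch of the same polling with a retry limit could look like this. The names wait_until and vdisk_is_ready are hypothetical and were not part of the scripts I actually ran.

```shell
# wait_until MAX_TRIES INTERVAL CMD...: rerun CMD until it succeeds
# or MAX_TRIES attempts have been used up. Returns 0 on success, 1 on timeout.
wait_until() {
    tries=$1
    interval=$2
    shift 2
    while [ "$tries" -gt 0 ]; do
        "$@" && return 0
        tries=$((tries - 1))
        sleep "$interval"
    done
    return 1
}

# Hypothetical readiness check built on status-vdisk.sh from this post.
# It is only defined here, not called, because it needs the OMSA tools.
vdisk_is_ready() {
    [ "$(bash status-vdisk.sh | grep -i state | head -n1 | awk '{print $3}')" = "Ready" ]
}

# Demo with a command that always succeeds
wait_until 3 0 true && echo "ready"
```

In the test loop this would be used as something like `wait_until 720 5 vdisk_is_ready || echo "init timed out"`, where 720 tries at 5 seconds each is an arbitrary one-hour limit.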

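The tests were set up to avoid cache effects as much as possible (no read-ahead and write-through on the controller, O_DIRECT in vdbench).

The results are as follows.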

| I/O size / cache | RAID | Disks | R/W | IOPS |
|---|---|---|---|---|
| 512 | RAID 0 | 2 | read | 514.26 |
| 512 | RAID 0 | 3 | read | 677.07 |
| 512 | RAID 0 | 4 | read | 830.22 |
| 512 | RAID 0 | 5 | read | 947.13 |
| 512 | RAID 0 | 6 | read | 1027.46 |
| 512 | RAID 0 | 7 | read | 1108.98 |
| 512 | RAID 0 | 8 | read | 1121.44 |
| 512 | RAID 0 | 9 | read | 1207.18 |
| 512 | RAID 0 | 10 | read | 1265.49 |
| 512 | RAID 0 | 11 | read | 1286.46 |
| 512 | RAID 0 | 12 | read | 1335.8 |
| 512 | RAID 10 | 4 | read | 815.64 |
| 512 | RAID 10 | 6 | read | 975.67 |
| 512 | RAID 10 | 8 | read | 1119.82 |
| 512 | RAID 10 | 10 | read | 1212.33 |
| 512 | RAID 10 | 12 | read | 1303.5 |
| 512 | RAID 5 | 3 | read | 635.9 |
| 512 | RAID 5 | 4 | read | 783.8 |
| 512 | RAID 5 | 5 | read | 911.86 |
| 512 | RAID 5 | 6 | read | 1010.51 |
| 512 | RAID 5 | 7 | read | 1074.68 |
| 512 | RAID 5 | 8 | read | 1145.63 |
| 512 | RAID 5 | 9 | read | 1187.66 |
| 512 | RAID 5 | 10 | read | 1242.5 |
| 512 | RAID 5 | 11 | read | 1289.53 |
| 512 | RAID 5 | 12 | read | 1310.89 |
| 512 | RAID 6 | 4 | read | 729.6 |
| 512 | RAID 6 | 5 | read | 850.5 |
| 512 | RAID 6 | 6 | read | 983.22 |
| 512 | RAID 6 | 7 | read | 1053.27 |
| 512 | RAID 6 | 8 | read | 1126.92 |
| 512 | RAID 6 | 9 | read | 1179.58 |
| 512 | RAID 6 | 10 | read | 1217.82 |
| 512 | RAID 6 | 11 | read | 1274.77 |
| 512 | RAID 6 | 12 | read | 1296.48 |

There is more data, but I'll show only this much for now.
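One quick way to read the numbers above is to divide the total by the disk count. The sketch below does that for three of the RAID 0 read rows. The helper name per_disk_iops is made up for illustration, and the three rows are copied straight from the results.

```shell
# per_disk_iops TOTAL_IOPS DISKS: average IOPS contributed by each disk.
# Hypothetical helper; awk is used because the shell only does integer math.
per_disk_iops() {
    awk -v iops="$1" -v disks="$2" 'BEGIN { printf "%.1f\n", iops / disks }'
}

# RAID 0 read rows copied from the results above, as "disks iops"
while read -r disks iops; do
    echo "RAID 0, $disks disks: $(per_disk_iops "$iops" "$disks") IOPS per disk"
done <<EOF
2 514.26
6 1027.46
12 1335.8
EOF
```

Total IOPS keeps climbing as disks are added, while the share per disk drops, so the scaling in this test is clearly less than linear.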

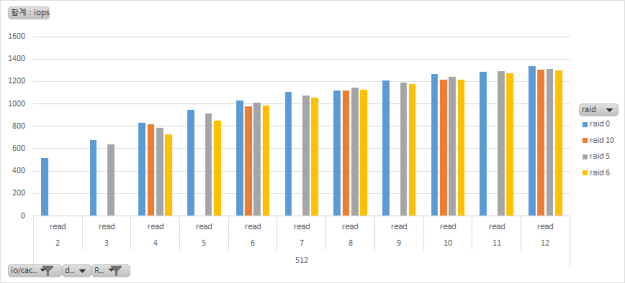

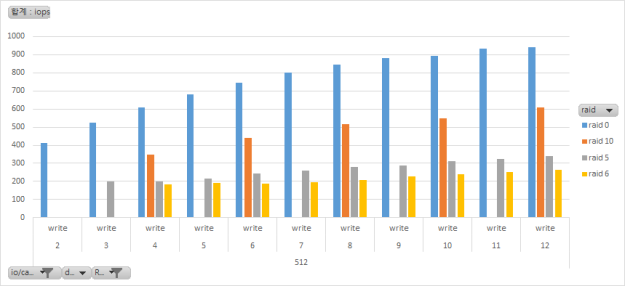

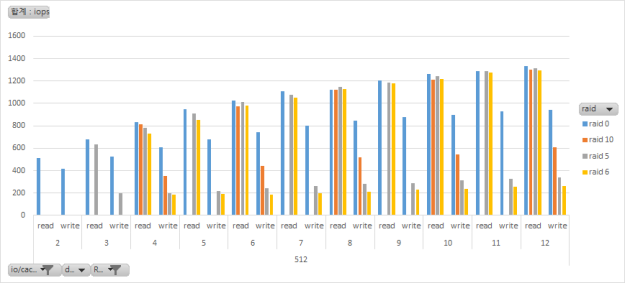

Plotted as graphs…

read

write

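read vs. write comparison

The results came out roughly like this.

I ran the test in three variants: a 512-byte transfer size, a 4096-byte transfer size, and the HBA write policy set to write-back. They all came out about the same. 512 and 4096 bytes probably behave alike because a single page is 4 KB anyway. The write-back run showed a slight performance gain, but the overall picture was similar.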

The following formulas give the raw performance of each RAID level. They assume there is no waiting time at all in I/O processing.

N = number of disks (8)

X = IOPS a single disk can deliver (125)

For reads, every RAID level delivers NX IOPS, leaving parity out of the picture.

Write RAID 0 = NX = 8×125 = 1000

Write RAID 10 = NX/2 = 8×125/2 = 500

Write RAID 5 = NX/4 = 8×125/4 = 250

Write RAID 6 = NX/6 = 8×125/6 = 166

I referred to the site below.

https://www.storagecraft.com/blog/raid-performance/

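A RAID 5 write involves four operations: read the data, read the parity, write the data, and write the parity. That is why the total is divided by 4. RAID 6 has one more parity block, which adds one more parity read and one more parity write, so the total is divided by 6.

The test results came out roughly in line with this.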
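The formulas above can be sketched as a small shell function. The name raid_write_iops is hypothetical, the divisors are the ones listed above (1, 2, 4, 6), and the result is truncated to an integer because shell arithmetic has no fractions.

```shell
# raid_write_iops LEVEL N X: theoretical write IOPS for N disks that
# each deliver X IOPS, using the per-level write penalties listed above.
# Hypothetical helper; integer division truncates (8*125/6 gives 166).
raid_write_iops() {
    case "$1" in
        0)  penalty=1 ;;
        10) penalty=2 ;;
        5)  penalty=4 ;;
        6)  penalty=6 ;;
        *)  echo "unsupported RAID level: $1" >&2; return 1 ;;
    esac
    echo $(( $2 * $3 / penalty ))
}

# Same example as in the text: N=8 disks, X=125 IOPS per disk
for level in 0 10 5 6; do
    echo "RAID $level write: $(raid_write_iops "$level" 8 125) IOPS"
done
```

Keep in mind this is only the idealized model with no waiting time. It says nothing about controller cache or queueing.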

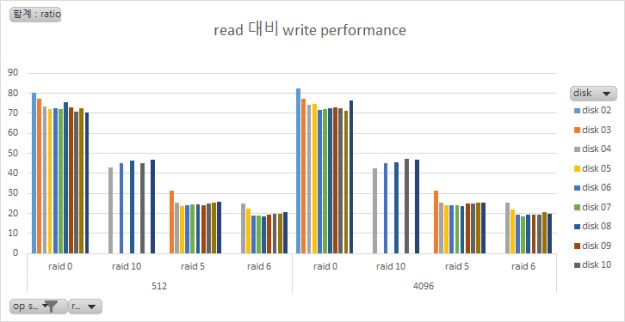

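Write performance relative to read, by operation size

Conclusion

1. As long as the HBA can keep up, I/O performance keeps rising as disks are added. According to the storage vendor, the HBA's cache size determines how many disks it can handle. I could only use up to 12 disks in this setup, so I tested only up to 12.

2. The site above advises against using RAID 5 for safety reasons. In practice, the write performance relative to read does not differ much between RAID 5 and RAID 6: RAID 5 reaches roughly 25% of read performance and RAID 6 about 20%. The gap narrows as the number of disks grows. So let's use RAID 6.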