oVirt 3.6 Install

Now that nested KVM is set up, let's install oVirt 3.6.

First, what you'll need:

1. A server, VM, or PC to run oVirt on

2. Hostnames and IP addresses

3. A domain name

4. ISO files

CentOS 7 ISO

Windows 2012 R2 ISO

5. Internet access

That's about it, I think. I'll add anything else as I go. :)

We'll install oVirt using the hosted engine method.

Install the yum repository

$ yum install http://resources.ovirt.org/pub/yum-repo/ovirt-release36.rpm

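Whatever you're doing, it's a good idea to watch the logs as you go. I watch /var/log/messages together with the vdsm log (/var/log/vdsm/vdsm.log).

byobu is handy for watching them side by side. :)

From here on, I explain things based on a captured transcript of my terminal session.

In byobu, Shift+F7 exports the entire scrollback history of the current window to a file. If you capture a session with the script command instead, the output is littered with backspaces and other control characters that make it hard to read. byobu's export contains only what actually appeared in the terminal. Very handy.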

[root@hosted-engine ~]#

[root@hosted-engine ~]# yum install http://resources.ovirt.org/pub/yum-repo/ovirt-release36.rpm

### oVirt 3.6 repository install ###

Loaded plugins: fastestmirror, langpacks

[root@hosted-engine ~]# yum install -y epel-release

### epel repository install ###

[root@hosted-engine ~]# yum install -y ovirt-hosted-engine-setup screen system-storage-manager

### Install ovirt-hosted-engine-setup, screen, and system-storage-manager ###

The oVirt manual also recommends running the setup inside screen, in case the network connection drops.

system-storage-manager (ssm) is a tool that makes working with Linux LVM much simpler. It's great. :)

Loaded plugins: fastestmirror, langpacks

epel/x86_64/metalink | 4.7 kB 00:00:00

epel | 4.3 kB 00:00:00

(1/3): epel/x86_64/group_gz | 169 kB 00:00:00

(2/3): epel/x86_64/updateinfo | 432 kB 00:00:00

(3/3): epel/x86_64/primary_db | 3.7 MB 00:00:00

Loading mirror speeds from cached hostfile

* base: data.nicehosting.co.kr

* epel: mirror.premi.st

* extras: data.nicehosting.co.kr

* ovirt-3.6: ftp.nluug.nl

* ovirt-3.6-epel: mirror.premi.st

* updates: data.nicehosting.co.kr

Resolving Dependencies

--> Running transaction check

---> Package ovirt-hosted-engine-setup.noarch 0:1.3.0-1.el7.centos will be installed

### (output omitted) ###


Dependency Updated:

glusterfs.x86_64 0:3.7.6-1.el7 glusterfs-api.x86_64 0:3.7.6-1.el7 glusterfs-libs.x86_64 0:3.7.6-1.el7

Replaced:

qemu-img.x86_64 10:1.5.3-86.el7_1.8 qemu-kvm.x86_64 10:1.5.3-86.el7_1.8

qemu-kvm-common.x86_64 10:1.5.3-86.el7_1.8

Complete!
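The three install commands above can be collected into one script. This is a minimal sketch that assumes CentOS 7 and a root shell. The run helper, the DRY_RUN variable, and the prepare_ovirt_packages name are my own hypothetical additions and are not provided by oVirt. When DRY_RUN is set, the script only prints the commands, so you can review them before running anything.

```shell
#!/usr/bin/env bash
# Sketch: the package preparation steps from this post in one place.
# Hypothetical helper: when DRY_RUN is set, commands are printed, not run.
run() {
    if [[ -n "${DRY_RUN:-}" ]]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

prepare_ovirt_packages() {
    # oVirt 3.6 release package, which adds the ovirt-3.6 yum repositories
    run yum install -y http://resources.ovirt.org/pub/yum-repo/ovirt-release36.rpm
    # EPEL repository
    run yum install -y epel-release
    # installer, screen for a detachable session, ssm for easier LVM work
    run yum install -y ovirt-hosted-engine-setup screen system-storage-manager
}

# Usage (as root):
#   DRY_RUN=1 prepare_ovirt_packages   # preview the commands
#   prepare_ovirt_packages             # actually install
```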

[root@hosted-engine ~]# vim /etc/hosts

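### Edit the hosts file. Use FQDNs, including the domain name. In hosts(5) the first name after the IP is the canonical one, so put the FQDN first, as in the example. ###

Example: 192.168.111.11 host1.test.dom host1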

[root@hosted-engine ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.111.10 hosted-engine hosted-engine.test.dom

192.168.111.11 host1 host1.test.dom

192.168.111.12 ovirt-engine engine engine.test.dom

[root@hosted-engine ~]# scp /etc/hosts host1:/etc/

### Make host1's hosts file identical. ###

The authenticity of host 'host1 (192.168.111.11)' can't be established.

ECDSA key fingerprint is be:54:41:2d:27:a0:00:a0:54:e6:42:c7:1a:69:b7:d0.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'host1,192.168.111.11' (ECDSA) to the list of known hosts.

root@host1's password:

hosts 100% 253 0.3KB/s 00:00
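Everything in this setup depends on the hosts file, so a quick check is worthwhile. In hosts(5), the first name after the IP is the canonical name, which is the one a reverse lookup returns. Below is a minimal sketch that flags entries whose first name contains no dot. check_hosts_fqdn is a hypothetical helper of mine. Note that the file captured above lists the short name first, so this check would flag those lines.

```shell
#!/usr/bin/env bash
# Sketch: report hosts-file entries whose first (canonical) name is not an FQDN.
# hosts(5) format: IP_address canonical_hostname [aliases...]
check_hosts_fqdn() {
    local file="${1:-/etc/hosts}" bad=0 ip name rest
    # "|| [[ -n $ip ]]" also processes a last line without a trailing newline
    while read -r ip name rest || [[ -n "$ip" ]]; do
        # skip blank lines and comments
        [[ -z "$ip" || "$ip" == \#* ]] && continue
        # skip loopback entries; they are not part of the oVirt setup
        [[ "$ip" == 127.* || "$ip" == "::1" ]] && continue
        if [[ "$name" != *.* ]]; then
            echo "not an FQDN: $ip $name"
            bad=1
        fi
    done < "$file"
    return "$bad"
}

# Usage: check_hosts_fqdn /etc/hosts
```

To confirm forward resolution on each host, getent hosts engine.test.dom should print the engine's IP.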

[root@hosted-engine ~]# date

### Check the time. In an isolated environment you need to run your own NTP server; I'll cover that in a later post. ###

Tue Dec 15 02:46:24 KST 2015

[root@hosted-engine ~]# timedatectl

### Since RHEL 7, timedatectl shows the time settings in much more detail. ###

Local time: Tue 2015-12-15 02:46:30 KST

Universal time: Mon 2015-12-14 17:46:30 UTC

RTC time: Mon 2015-12-14 17:46:30

Timezone: Asia/Seoul (KST, +0900)

NTP enabled: yes

NTP synchronized: yes

RTC in local TZ: no

DST active: n/a
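The NTP check can also be scripted, for example to run on each host before the deploy. Here is a sketch that reads timedatectl output on stdin. clock_synced is a hypothetical name, and the two accepted label spellings are my assumption about older and newer systemd releases.

```shell
#!/usr/bin/env bash
# Sketch: succeed if timedatectl output (on stdin) reports a synchronized clock.
# CentOS 7's systemd prints "NTP synchronized: yes"; newer systemd releases
# print "System clock synchronized: yes", so both spellings are accepted.
clock_synced() {
    grep -Eq '^[[:space:]]*(NTP|System clock) synchronized: yes'
}

# Usage: timedatectl | clock_synced && echo "clock OK"
```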

[root@hosted-engine ~]# pwd

/root

[root@hosted-engine ~]# df

Filesystem 1K-blocks Used Available Use% Mounted on

/dev/mapper/ssm-root 41922560 4172320 37750240 10% /

devtmpfs 8124500 0 8124500 0% /dev

tmpfs 8134132 80 8134052 1% /dev/shm

tmpfs 8134132 9084 8125048 1% /run

tmpfs 8134132 0 8134132 0% /sys/fs/cgroup

/dev/vda1 508588 171760 336828 34% /boot

[root@hosted-engine ~]# cd /etc/exports.d/

[root@hosted-engine exports.d]# ls

[root@hosted-engine exports.d]#


[root@hosted-engine exports.d]# vim ovirt36.exports

### Configure the NFS export. ###

/engine *(rw)

[root@hosted-engine exports.d]# ls

ovirt36.exports

[root@hosted-engine exports.d]# pwd

/etc/exports.d

[root@hosted-engine exports.d]#


[root@hosted-engine exports.d]# mkdir /engine

[root@hosted-engine exports.d]# pwd

/etc/exports.d

[root@hosted-engine exports.d]# df

Filesystem 1K-blocks Used Available Use% Mounted on

/dev/mapper/ssm-root 41922560 4172440 37750120 10% /

devtmpfs 8124500 0 8124500 0% /dev

tmpfs 8134132 80 8134052 1% /dev/shm

tmpfs 8134132 9060 8125072 1% /run

tmpfs 8134132 0 8134132 0% /sys/fs/cgroup

/dev/vda1 508588 171760 336828 34% /boot

[root@hosted-engine exports.d]# ssm list

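### Check LVM. The ssm pool has only 4 MB free and there's no volume for the engine, so let's create a new partition from the unallocated space. ###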

———————————————————-

Device Free Used Total Pool Mount point

———————————————————-

/dev/vda 100.00 GB PARTITIONED

/dev/vda1 500.00 MB /boot

/dev/vda2 4.00 MB 44.00 GB 44.00 GB ssm

———————————————————-

————————————————

Pool Type Devices Free Used Total

————————————————

ssm lvm 1 4.00 MB 44.00 GB 44.00 GB

————————————————

——————————————————————————–

Volume Pool Volume size FS FS size Free Type Mount point

——————————————————————————–

/dev/ssm/root ssm 40.00 GB xfs 39.98 GB 36.20 GB linear /

/dev/ssm/swap ssm 4.00 GB linear

/dev/vda1 500.00 MB xfs 496.67 MB 328.96 MB part /boot

——————————————————————————–

[root@hosted-engine exports.d]# fdisk /dev/vda

Welcome to fdisk (util-linux 2.23.2).

Changes will remain in memory only, until you decide to write them.

Be careful before using the write command.

Command (m for help): p

Disk /dev/vda: 107.4 GB, 107374182400 bytes, 209715200 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk label type: dos

Disk identifier: 0x000b435d

Device Boot Start End Blocks Id System

/dev/vda1 * 2048 1026047 512000 83 Linux

/dev/vda2 1026048 93306879 46140416 8e Linux LVM

Command (m for help): n

Partition type:

p primary (2 primary, 0 extended, 2 free)

e extended

Select (default p):

Using default response p

Partition number (3,4, default 3):

First sector (93306880-209715199, default 93306880):

Using default value 93306880

Last sector, +sectors or +size{K,M,G} (93306880-209715199, default 209715199):

Using default value 209715199

Partition 3 of type Linux and of size 55.5 GiB is set

Command (m for help):


Command (m for help): p

Disk /dev/vda: 107.4 GB, 107374182400 bytes, 209715200 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk label type: dos

Disk identifier: 0x000b435d

Device Boot Start End Blocks Id System

/dev/vda1 * 2048 1026047 512000 83 Linux

/dev/vda2 1026048 93306879 46140416 8e Linux LVM

/dev/vda3 93306880 209715199 58204160 83 Linux

Command (m for help): p

Disk /dev/vda: 107.4 GB, 107374182400 bytes, 209715200 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk label type: dos

Disk identifier: 0x000b435d

Device Boot Start End Blocks Id System

/dev/vda1 * 2048 1026047 512000 83 Linux

/dev/vda2 1026048 93306879 46140416 8e Linux LVM

/dev/vda3 93306880 209715199 58204160 83 Linux

Command (m for help): w

The partition table has been altered!

Calling ioctl() to re-read partition table.

WARNING: Re-reading the partition table failed with error 16: Device or resource busy.

The kernel still uses the old table. The new table will be used at

the next reboot or after you run partprobe(8) or kpartx(8)

Syncing disks.

[root@hosted-engine exports.d]#


[root@hosted-engine exports.d]# partprobe

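### The disk I just modified holds the mounted root filesystem, so the kernel couldn't re-read the table (see the warning above). partprobe makes the kernel pick up the new partition table without a reboot. ###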
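To confirm that the kernel now sees the new partition before touching LVM, you can check /proc/partitions, which lists every partition the kernel knows about. Here is a small sketch. kernel_has_partition is a hypothetical helper, and the optional second argument exists only so the function can be tried against a sample file.

```shell
#!/usr/bin/env bash
# Sketch: check whether the kernel currently knows about a partition.
# /proc/partitions columns: major minor #blocks name
kernel_has_partition() {
    local part="$1" table="${2:-/proc/partitions}"
    awk -v p="$part" '$4 == p { found = 1 } END { exit !found }' "$table"
}

# Usage: kernel_has_partition vda3 || partprobe /dev/vda
```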

[root@hosted-engine exports.d]# ssm list

———————————————————-

Device Free Used Total Pool Mount point

———————————————————-

/dev/vda 100.00 GB PARTITIONED

/dev/vda1 500.00 MB /boot

/dev/vda2 4.00 MB 44.00 GB 44.00 GB ssm

/dev/vda3 55.51 GB

———————————————————-

————————————————

Pool Type Devices Free Used Total

————————————————

ssm lvm 1 4.00 MB 44.00 GB 44.00 GB

————————————————

——————————————————————————–

Volume Pool Volume size FS FS size Free Type Mount point

——————————————————————————–

/dev/ssm/root ssm 40.00 GB xfs 39.98 GB 35.94 GB linear /

/dev/ssm/swap ssm 4.00 GB linear

/dev/vda1 500.00 MB xfs 496.67 MB 328.96 MB part /boot

——————————————————————————–

[root@hosted-engine exports.d]# ssm add -p ssm /dev/vda3

### Add /dev/vda3 as a PV to the ssm pool (volume group). ###

Physical volume “/dev/vda3” successfully created

Volume group “ssm” successfully extended

[root@hosted-engine exports.d]# ssm create -p ssm -n engine -s 30g --fs xfs

### Create the LV: 30 GB, named engine, in the ssm pool, formatted as XFS. ###

Logical volume “engine” created.

meta-data=/dev/ssm/engine isize=256 agcount=4, agsize=1966080 blks

= sectsz=512 attr=2, projid32bit=1

= crc=0 finobt=0

data = bsize=4096 blocks=7864320, imaxpct=25

= sunit=0 swidth=0 blks

naming =version 2 bsize=4096 ascii-ci=0 ftype=0

log =internal log bsize=4096 blocks=3840, version=2

= sectsz=512 sunit=0 blks, lazy-count=1

realtime =none extsz=4096 blocks=0, rtextents=0
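ssm is a front end to LVM, so you can reach the same result with the standard LVM tools if ssm isn't installed. Here is a sketch of what I believe the two ssm commands above roughly correspond to. add_pv_and_create_lv and DRY_RUN are hypothetical names, and the exact steps ssm performs internally may differ.

```shell
#!/usr/bin/env bash
# Sketch: plain LVM commands roughly equivalent to
#   ssm add -p ssm /dev/vda3
#   ssm create -p ssm -n engine -s 30g --fs xfs
# Hypothetical helper: when DRY_RUN is set, commands are printed, not run.
run() {
    if [[ -n "${DRY_RUN:-}" ]]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

add_pv_and_create_lv() {
    local vg="$1" dev="$2" lv="$3" size="$4"
    run pvcreate "$dev"                      # initialize the partition as a PV
    run vgextend "$vg" "$dev"                # add it to the existing VG
    run lvcreate -n "$lv" -L "$size" "$vg"   # carve out the LV
    run mkfs.xfs "/dev/$vg/$lv"              # put an XFS filesystem on it
}

# Usage (as root): DRY_RUN=1 add_pv_and_create_lv ssm /dev/vda3 engine 30G
```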

[root@hosted-engine exports.d]# ssm list

### You can see that a 30 GB volume named engine has been created. ###

———————————————————–

Device Free Used Total Pool Mount point

———————————————————–

/dev/vda 100.00 GB PARTITIONED

/dev/vda1 500.00 MB /boot

/dev/vda2 4.00 MB 44.00 GB 44.00 GB ssm

/dev/vda3 25.50 GB 30.00 GB 55.51 GB ssm

———————————————————–

————————————————-

Pool Type Devices Free Used Total

————————————————-

ssm lvm 2 25.51 GB 74.00 GB 99.50 GB

————————————————-

———————————————————————————-

Volume Pool Volume size FS FS size Free Type Mount point

———————————————————————————-

/dev/ssm/root ssm 40.00 GB xfs 39.98 GB 35.94 GB linear /

/dev/ssm/swap ssm 4.00 GB linear

/dev/ssm/engine ssm 30.00 GB xfs 29.99 GB 29.99 GB linear

/dev/vda1 500.00 MB xfs 496.67 MB 328.96 MB part /boot

———————————————————————————-

[root@hosted-engine exports.d]# blkid /dev/ssm/engine

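### When mounting permanently via /etc/fstab, always use the block ID (UUID). Below, the blkid output is appended to /etc/fstab and then edited in vim into a proper entry (for example, UUID=aa7eb35f-e496-4ecf-aa1e-b5cd7da1f4c8 /engine xfs defaults 0 0). ###

/dev/ssm/engine: UUID="aa7eb35f-e496-4ecf-aa1e-b5cd7da1f4c8" TYPE="xfs"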

[root@hosted-engine exports.d]# !! >> /etc/fstab

blkid /dev/ssm/engine >> /etc/fstab

[root@hosted-engine exports.d]# vim /etc/fstab
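Instead of appending the raw blkid output and fixing it up in vim, you can generate the fstab entry directly. This sketch assumes the defaults mount options and 0 0 for the dump and fsck fields, which is what CentOS 7 typically uses for XFS. fstab_line is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sketch: print an fstab(5) entry that mounts a filesystem by UUID.
# Fields: device, mount point, fs type, options, dump, fsck pass.
fstab_line() {
    local uuid="$1" mnt="$2" fstype="$3" opts="${4:-defaults}"
    printf 'UUID=%s %s %s %s 0 0\n' "$uuid" "$mnt" "$fstype" "$opts"
}

# Usage (as root):
#   uuid=$(blkid -s UUID -o value /dev/ssm/engine)
#   fstab_line "$uuid" /engine xfs >> /etc/fstab
#   mount -a
```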

[root@hosted-engine exports.d]# mount -a

[root@hosted-engine exports.d]# df

### Check that it mounted correctly. ###

Filesystem 1K-blocks Used Available Use% Mounted on

/dev/mapper/ssm-root 41922560 4172448 37750112 10% /

devtmpfs 8124500 0 8124500 0% /dev

tmpfs 8134132 80 8134052 1% /dev/shm

tmpfs 8134132 9076 8125056 1% /run

tmpfs 8134132 0 8134132 0% /sys/fs/cgroup

/dev/vda1 508588 171760 336828 34% /boot

/dev/mapper/ssm-engine 31441920 32928 31408992 1% /engine
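Now that /engine is mounted, this is also where ownership would be set. As far as I know, oVirt's NFS storage documentation asks for the export directory to be owned by vdsm:kvm (UID/GID 36:36) with mode 0755, but check the docs for your version. The chown has to happen after mounting, because a mount hides the ownership of the underlying directory. export_line, prepare_nfs_export, and DRY_RUN in the sketch below are hypothetical names.

```shell
#!/usr/bin/env bash
# Sketch: prepare a mounted directory as an oVirt NFS export.
# Assumption: vdsm:kvm is UID/GID 36:36, as commonly documented for oVirt.
run() {
    if [[ -n "${DRY_RUN:-}" ]]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# Print one exports(5) line: directory, client spec, and options.
export_line() {
    printf '%s %s(%s)\n' "$1" "${2:-*}" "${3:-rw}"
}

prepare_nfs_export() {
    local dir="$1"
    run chown 36:36 "$dir"    # vdsm:kvm should own the storage directory
    run chmod 0755 "$dir"
}

# Usage (as root):
#   export_line /engine > /etc/exports.d/ovirt36.exports   # same line as above
#   prepare_nfs_export /engine
```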

[root@hosted-engine exports.d]# ssm list

———————————————————–

Device Free Used Total Pool Mount point

———————————————————–

/dev/vda 100.00 GB PARTITIONED

/dev/vda1 500.00 MB /boot

/dev/vda2 4.00 MB 44.00 GB 44.00 GB ssm

/dev/vda3 25.50 GB 30.00 GB 55.51 GB ssm

———————————————————–

————————————————-

Pool Type Devices Free Used Total

————————————————-

ssm lvm 2 25.51 GB 74.00 GB 99.50 GB

————————————————-

———————————————————————————-

Volume Pool Volume size FS FS size Free Type Mount point

———————————————————————————-

/dev/ssm/root ssm 40.00 GB xfs 39.98 GB 35.94 GB linear /

/dev/ssm/swap ssm 4.00 GB linear

/dev/ssm/engine ssm 30.00 GB xfs 29.99 GB 29.99 GB linear /engine

/dev/vda1 500.00 MB xfs 496.67 MB 328.96 MB part /boot

———————————————————————————-

[root@hosted-engine exports.d]# pwd

/etc/exports.d

[root@hosted-engine exports.d]# systemctl status nfs-server

nfs-server.service - NFS server and services

Loaded: loaded (/usr/lib/systemd/system/nfs-server.service; disabled)

Active: inactive (dead)

[root@hosted-engine exports.d]# systemctl restart nfs-server

### Once everything is ready, start the NFS server. ###

[root@hosted-engine exports.d]# systemctl enable nfs-server

### Enable the daemon so it starts automatically at boot. ###

ln -s '/usr/lib/systemd/system/nfs-server.service' '/etc/systemd/system/multi-user.target.wants/nfs-server.service'

[root@hosted-engine exports.d]# exportfs

### Check the NFS exports. ###

/engine <world>

[root@hosted-engine exports.d]# showmount -e

Export list for hosted-engine:

/engine *
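The same check can be scripted, which is handy when re-running these steps. Here is a sketch that reads showmount -e output on stdin. export_listed is a hypothetical name.

```shell
#!/usr/bin/env bash
# Sketch: succeed if `showmount -e` output (on stdin) lists the given export.
# The first line is the "Export list for HOST:" header; each later line is
# "<path> <clients>".
export_listed() {
    awk -v p="$1" 'NR > 1 && $1 == p { found = 1 } END { exit !found }'
}

# Usage: showmount -e localhost | export_listed /engine && echo "export OK"
```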

[root@hosted-engine exports.d]#

[root@hosted-engine exports.d]# screen -S lee

### Start a screen session. If the connection drops, you can reattach with screen -r lee. ###

[root@hosted-engine ~]# hosted-engine --deploy

### hosted-engine deploy ###

[ INFO ] Stage: Initializing

[ INFO ] Generating a temporary VNC password.

[ INFO ] Stage: Environment setup

Continuing will configure this host for serving as hypervisor and create a VM where you have to

install oVirt Engine afterwards.

Are you sure you want to continue? (Yes, No)[Yes]:

### Nothing special here; just Yes. ###

Configuration files: []

Log file: /var/log/ovirt-hosted-engine-setup/ovirt-hosted-engine-setup-20151215030400-5sehtn.log

Version: otopi-1.4.0 (otopi-1.4.0-1.el7.centos)

[ INFO ] Hardware supports virtualization

[ INFO ] Stage: Environment packages setup

[ INFO ] Stage: Programs detection

[ INFO ] Stage: Environment setup

[ INFO ] Stage: Environment customization

--== STORAGE CONFIGURATION ==--

During customization use CTRL-D to abort.

Please specify the storage you would like to use (glusterfs, iscsi, fc, nfs3, nfs4)[nfs3]: nfs4

### I'll use nfs4, which should perform better. NFSv4 is also what the RHEL 7 NFS server offers by default. ###

Please specify the full shared storage connection path to use (example: host:/path): hosted-engine:/engine

### Use the NFS volume exported earlier. ###

[ INFO ] Installing on first host

Please provide storage domain name. [hosted_storage]:

### Storage domain name; just keep the default. ###

Local storage datacenter name is an internal name

and currently will not be shown in engine's admin UI.

Please enter local datacenter name [hosted_datacenter]:

### Keep the default. ###

--== SYSTEM CONFIGURATION ==--

--== NETWORK CONFIGURATION ==--

Please indicate a nic to set ovirtmgmt bridge on: (ens8) [ens8]:

### This asks which physical device to build the ovirtmgmt bridge on. The answer can differ if you've set up bonding or similar, so check it carefully. ###

iptables was detected on your computer, do you wish setup to configure it? (Yes, No)[Yes]:

### Setup will configure iptables for you. Doing it by hand is tedious, so let oVirt handle it. ###

Please indicate a pingable gateway IP address [192.168.111.1]:

### Gateway IP address. ###

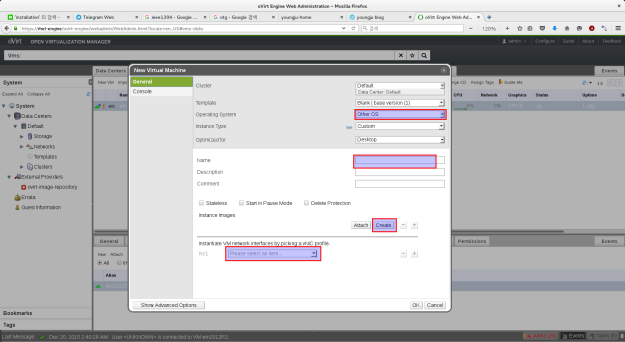

--== VM CONFIGURATION ==--

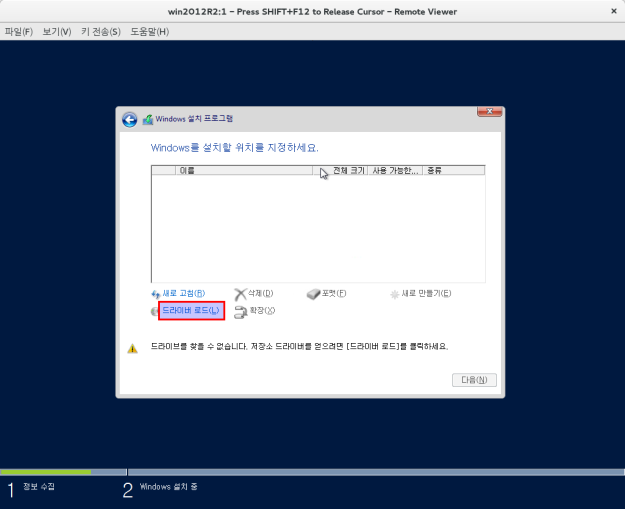

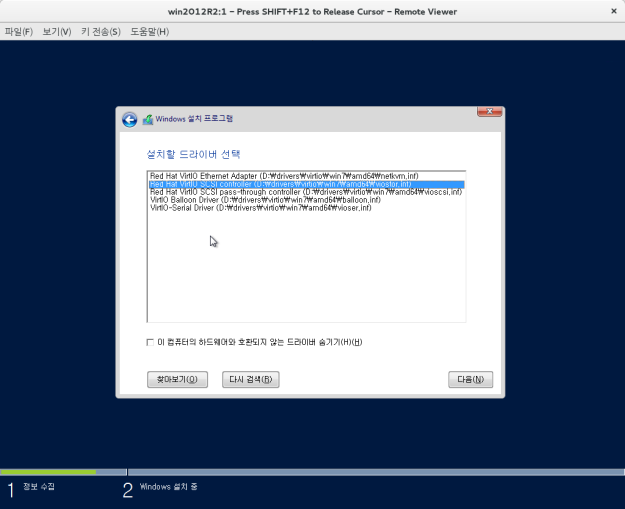

Please specify the device to boot the VM from (choose disk for the oVirt engine appliance)

(cdrom, disk, pxe) [disk]: cdrom

### I'll install from a CD-ROM image. ###

Please specify an alias for the Hosted Engine image [hosted_engine]:

### Keep the default. ###

The following CPU types are supported by this host:

- model_Westmere: Intel Westmere Family

- model_Nehalem: Intel Nehalem Family

- model_Penryn: Intel Penryn Family

- model_Conroe: Intel Conroe Family

Please specify the CPU type to be used by the VM [model_Westmere]:

### This is the CPU type, which matters later when you build a cluster. It doesn't have to match the exact CPU model you're running, as long as it's one of the types listed above, so the default is fine. ###

Please specify path to installation media you would like to use [None]: /iso/CentOS-7-x86_64-DVD-1503-01.iso

### Path to the installation ISO file. ###

Please specify the number of virtual CPUs for the VM [Defaults to minimum requirement: 2]: 4

### Number of vCPUs for the VM. ###

Please specify the disk size of the VM in GB [Defaults to minimum requirement: 25]:

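### I created a 30 GB volume earlier, so make the disk 25 GB. Don't use the whole 30 GB just because the volume is 30 GB: the filesystem and storage metadata need room too. That's probably only a few MB, but be generous and leave about 5 GB free. ###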
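You can sanity-check the 5 GB margin against the df numbers from earlier. Right after mkfs, /engine reported 31408992 KiB available, while a full 30 GiB disk needs 31457280 KiB, so it would not have fit at all. This sketch assumes the installer's "GB" means GiB. disk_fits is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sketch: does a VM disk of N GiB fit in the space left on a filesystem?
# Assumption: the installer's "GB" means GiB (1024^3 bytes).
# avail_kib is the "Available" column of `df -Pk`, in KiB.
disk_fits() {
    local avail_kib="$1" disk_gib="$2"
    (( disk_gib * 1024 * 1024 <= avail_kib ))
}

# Usage:
#   avail=$(df -Pk /engine | awk 'NR == 2 { print $4 }')
#   disk_fits "$avail" 25 && echo "25 GiB fits"
```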

You may specify a unicast MAC address for the VM or accept a randomly generated default [00:16:3e:68:97:c4]:

### MAC address; keep the default. ###

Please specify the memory size of the VM in MB [Defaults to minimum requirement: 4096]: 8192

### Memory. 16 GB is recommended; I'm using 8 GB here. ###

Please specify the console type you would like to use to connect to the VM (vnc, spice) [vnc]:

### Console protocol. I remember spice not working for me once, so I just use vnc. You hardly use it except during installation anyway. ###

--== HOSTED ENGINE CONFIGURATION ==--

Enter the name which will be used to identify this host inside the Administrator Portal [hosted_engine_1]:

### The name of this hosted-engine host as shown in the Administrator Portal. If you plan to add more hosts for redundancy, don't change it; keep the default. ###

Enter 'admin@internal' user password that will be used for accessing the Administrator Portal:

### Password for logging into the Administrator Portal. You can change it later, of course. ###

Confirm 'admin@internal' user password:

Please provide the FQDN for the engine you would like to use.

This needs to match the FQDN that you will use for the engine installation within the VM.

Note: This will be the FQDN of the VM you are now going to create,

it should not point to the base host or to any other existing machine.

Engine FQDN: engine.test.dom

### The engine's fully qualified domain name (FQDN). It must be registered in DNS or listed in the hosts file. ###

[WARNING] Failed to resolve engine.test.dom using DNS, it can be resolved only locally

Please provide the name of the SMTP server through which we will send notifications [localhost]:

### Which server to send notification mail through. Keep the default, or point it at a mail server you already have. ###

Please provide the TCP port number of the SMTP server [25]:

### The SMTP port; keep the default. ###

Please provide the email address from which notifications will be sent [root@localhost]:

### The sender address (account and server) for notification mail. ###

Please provide a comma-separated list of email addresses which will get notifications [root@localhost]:

### Who should receive notification mail. ###

[ INFO ] Stage: Setup validation

[WARNING] Failed to resolve hosted-engine.test.dom using DNS, it can be resolved only locally

--== CONFIGURATION PREVIEW ==--

Bridge interface : ens8

Engine FQDN : engine.test.dom

Bridge name : ovirtmgmt

SSH daemon port : 22

Firewall manager : iptables

Gateway address : 192.168.111.1

Host name for web application : hosted_engine_1

Host ID : 1

Image alias : hosted_engine

Image size GB : 25

GlusterFS Share Name : hosted_engine_glusterfs

GlusterFS Brick Provisioning : False

Storage connection : hosted-engine:/engine

Console type : vnc

Memory size MB : 8192

MAC address : 00:16:3e:68:97:c4

Boot type : cdrom

Number of CPUs : 4

ISO image (cdrom boot/cloud-init) : /iso/CentOS-7-x86_64-DVD-1503-01.iso

CPU Type : model_Westmere

Please confirm installation settings (Yes, No)[Yes]:

### Review everything, then enter Yes. ###

[ INFO ] Stage: Transaction setup

[ INFO ] Stage: Misc configuration

[ INFO ] Stage: Package installation

[ INFO ] Stage: Misc configuration

[ INFO ] Configuring libvirt

[ INFO ] Configuring VDSM

[ INFO ] Starting vdsmd

[ INFO ] Waiting for VDSM hardware info

[ INFO ] Configuring the management bridge

[ INFO ] Creating Storage Domain

[ INFO ] Creating Storage Pool

[ INFO ] Connecting Storage Pool

[ INFO ] Verifying sanlock lockspace initialization

[ INFO ] Creating VM Image

[ INFO ] Destroying Storage Pool

[ INFO ] Start monitoring domain

[ INFO ] Configuring VM

[ INFO ] Updating hosted-engine configuration

[ INFO ] Stage: Transaction commit

[ INFO ] Stage: Closing up

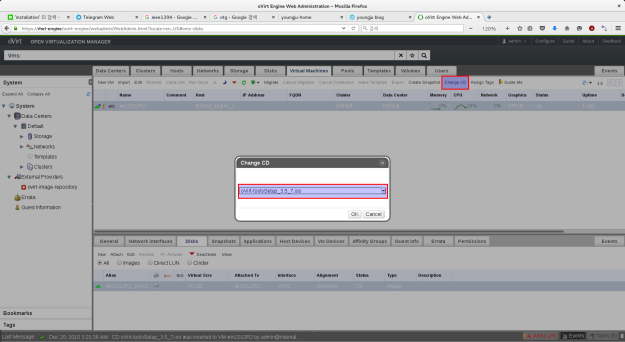

[ INFO ] Creating VM

You can now connect to the VM with the following command:

/bin/remote-viewer vnc://localhost:5900

### This command opens the engine VM's VNC console. ###

Use temporary password "0061uUGe" to connect to vnc console.

### Use the password above to connect to the VNC server. ###

Please note that in order to use remote-viewer you need to be able to run graphical applications.

This means that if you are using ssh you have to supply the -Y flag (enables trusted X11 forwarding).

Otherwise you can run the command from a terminal in your preferred desktop environment.

If you cannot run graphical applications you can connect to the graphic console from another host or connect to the serial console using the following command:

socat UNIX-CONNECT:/var/run/ovirt-vmconsole-console/841a1802-2166-4989-9b6d-9a5c403a47d1.sock,user=ovirt-vmconsole STDIO,raw,echo=0,escape=1

If you need to reboot the VM you will need to start it manually using the command:

hosted-engine --vm-start

You can then set a temporary password using the command:

hosted-engine --add-console-password

### You can also run this command to set a new password. ###

The VM has been started.

To continue please install OS and shutdown or reboot the VM.

Make a selection from the options below:

(1) Continue setup – OS installation is complete

(2) Power off and restart the VM

(3) Abort setup

(4) Destroy VM and abort setup

(1, 2, 3, 4)[1]:

### When the OS installation finishes, reboot the VM from the installer, confirm that it has shut down, then enter 1. ###

Please reboot or shutdown the VM.

Verifying shutdown…

[ INFO ] Creating VM

You can now connect to the VM with the following command:

/bin/remote-viewer vnc://localhost:5900

Use temporary password "0061uUGe" to connect to vnc console.

Please note that in order to use remote-viewer you need to be able to run graphical applications.

This means that if you are using ssh you have to supply the -Y flag (enables trusted X11 forwarding).

Otherwise you can run the command from a terminal in your preferred desktop environment.

If you cannot run graphical applications you can connect to the graphic console from another host or connect to the serial console using the following command:

socat UNIX-CONNECT:/var/run/ovirt-vmconsole-console/841a1802-2166-4989-9b6d-9a5c403a47d1.sock,user=ovirt-vmconsole STDIO,raw,echo=0,escape=1

If you need to reboot the VM you will need to start it manually using the command:

hosted-engine --vm-start

You can then set a temporary password using the command:

hosted-engine --add-console-password

Please install and setup the engine in the VM.

You may also be interested in installing ovirt-guest-agent-common package in the VM.

The VM has been rebooted.

To continue please install oVirt-Engine in the VM

(Follow http://www.ovirt.org/Quick_Start_Guide for more info).

Make a selection from the options below:

(1) Continue setup – oVirt-Engine installation is ready and ovirt-engine service is up

### Enter 1 once the oVirt engine installation is complete. ###

(2) Power off and restart the VM

### Enter 2 to power off and restart the VM. ###

(3) Abort setup

### Enter 3 to abort the installation, but entering 4 is the better choice. ###

(4) Destroy VM and abort setup

### 4 aborts the installation and destroys the VM. If you enter 3 instead, leftovers of the VM remain, so trying to install again fails with an error message. With 4, reinstalling works fine. ###

### In short, don't enter 3. ###

(1, 2, 3, 4)[1]:

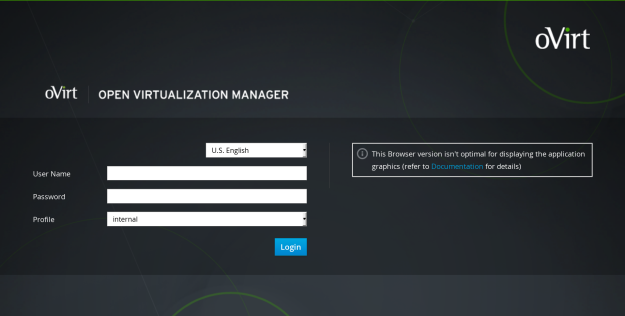

What follows is the engine installation.

It's a VM inside a VM, so it was painfully slow… it took a long time.

To be continued in Part 2…